Semantic Wayfinding

The Entwined Strands of Thought and Language of Which Novel Conceptions Are Composed and How the Discipline of Inspiration

As noted elsewhere - in other editions of this newsletter, in other publications covering the field of AI - a common pitfall of Large Language Model (LLM) use is the fallacy that the LLM is a place to offload human thinking: that we can set a platform such as ChatGPT or Claude a task and it will not only perform that task but also carry out all the cognition that necessarily precedes and underlies it. The results are not wrong every time, but this approach does lead to vagueness, approximation, and inaccuracy. I could pull up Claude, give it a topic and a word count, feed it my previous writing in the general subject area, and ask it to produce an edition of this newsletter while I go visit a day spa or hit the M&M Store again or whatever.

The resulting text would almost certainly be lackluster - not because I'm such a singular genius or because what I have to say is rare and wondrous, but because the motive underlying such an approach is not to seek the most penetrating insight or the greatest novelty of expression; it is to avoid effort. This, we at ZeroWidth have come to conclude, is perhaps the greatest peril implicit in LLM use (our Mindset Myth series of posts explores this topic in greater depth): the fallacy that the LLM is a substitute for thinking, that if we plug in some parameters, the platform will make new ideas for us. That will not happen, no matter how conscientious our inputs or how fervent our wish for it to be so; the resulting text will necessarily be a guesswork pastiche, an approximation of the ideas we're seeking to express. If we think of cognition as a deck of cards, an LLM, no matter how fast or sophisticated, demonstrates a near-infinite ability to shuffle that deck, to rearrange its constituent parts in seemingly limitless ways. What it cannot do is generate anything new: it isn't inventing new suits or face cards, isn't adding to the body of card knowledge.

Whether we’re writing with a quill on parchment or on a digital platform, it is a person's brain activity that renders a text clear or obscure, persuasive or not, lively or dull. We all have access to the same set of words, so it isn't the words themselves that make writing vital; we all operate within and from societal and cultural frames, so it isn't these frames that make writing singular. It is the sensibilities and aesthetic judgments, the expressive audacity and acuity of the individual creator that set one piece of writing apart from the rest. So there is literally NO SUBSTITUTE FOR THE LIVELY AND RESTLESS HUMAN MIND, and no attempt to replicate the generative capacities of people can succeed.

So if the LLM is NOT a form of alternate or substitute cognition, of original generative thought, what is it for? What utility or value does it provide? At ZeroWidth, we’ve come to understand the LLM as a tool for refining, shaping, and strengthening human thought, not as a surrogate or replacement for it. All the advisory and consulting work we do, and all the tools we design and build, treat this principle as central and indispensable to the adoption and deployment of any LLM solution.

At the recent Berkeley Master of Design (Berkeley MDes) Forum on Emerging Technologies conference, ZeroWidth co-founder John Cain delivered a talk offering what we hope you’ll find a useful framework for navigating this complex topic. The subject of the conference was “Interface to Agency,” and John’s talk centered on a way to formalize the process of design ideation so that it INCORPORATES but does not rely exclusively upon AI. That process hinges on a foundational understanding: the brain activity of people is, and must be, the animating energy underlying these forms of complex solution-seeking, and any process that’s wholly AI-reliant will effectively be a series of stabs in the dark nested inside a mess of impressive technology.

And while one could argue that human-driven design iteration is also a series of stabs in the dark, the reality (or the hope, anyway) is that the experience and judgment, the discernment and discipline of the trained human mind (whether working alone or in collaboration with others) will produce stabs that grow less dark over time, less wrong with each adjustment. The productivity of such iteration lies in the human capacity to measure, assess, amend, and reconceive, whereas the LLM can merely rearrange. So however impressive the speed at which it does so, we must not lose sight of the fact that extracting value from an LLM’s output hinges on a human ability to evaluate, rank, reconfigure, and, most crucially, reconceive.

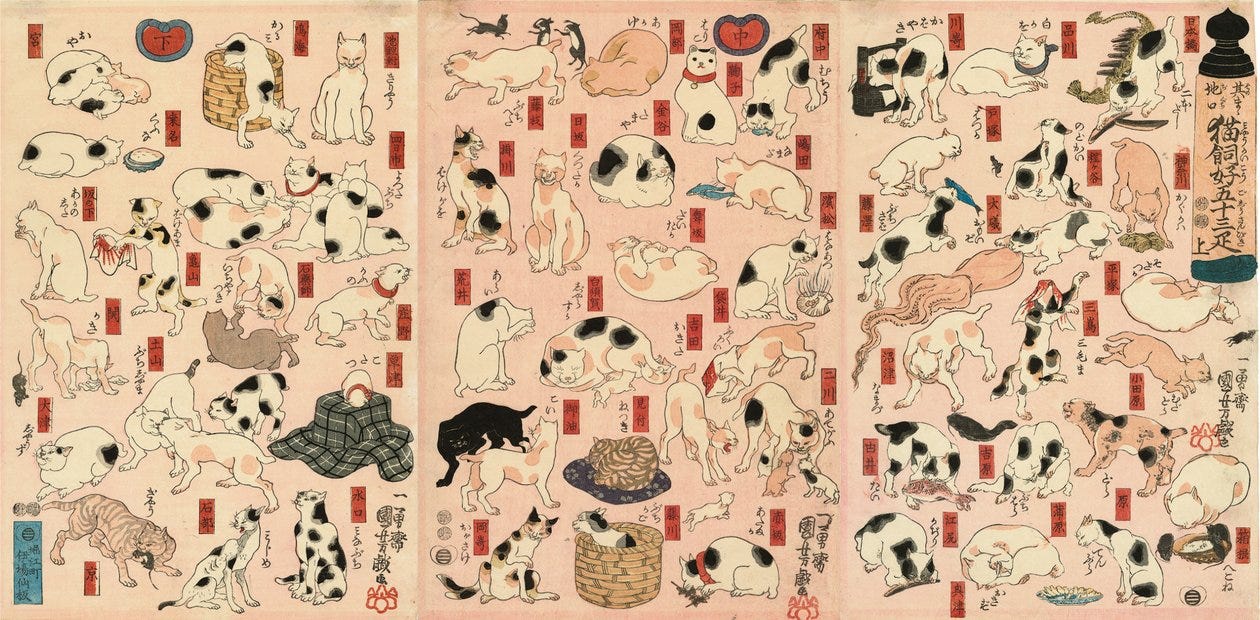

In his talk, John likens the LLM to the Renaissance-era advances in our ability to render images using linear perspective - techniques that did not suddenly let us see with greater depth or precision, but that transformatively expanded the tools of representation at our disposal. The gains made in representation, in turn, expanded the cognitive palette of visual expression. The tools and techniques of better draftsmanship - of creating representations more faithful to the world we directly observe around us - were not in themselves the basis of the resulting artistic revolution; that basis was the human minds that learned to incorporate these techniques into the way they conceived of things.

In the same way that a map is not a territory, or a painting is not a bowl of fruit, a plan is not time travel - it is a representation of an imagined future.

A similar principle of representational power holds where LLM use is concerned. In addition to speed, one of the virtues of the LLM is scale: it is better able than we are to hold conceptual sprawl. No matter how nimble or capable, the human brain can keep sight of only a finite set of contingencies, consequences, and causations at any one time. The LLM, by contrast, can draw on as many as its data set might contain. You or I might be able to commit a number of sonnets or soliloquies to memory and recite them, whereas the LLM can have the whole of Shakespeare ready to refer to. The LLM excels at drawing upon information at a scale beyond our abilities. Its role, therefore, is not to think on our behalf but to help us think, by helping us navigate the many branching avenues of potentiality while we’re still in the conceptual stage, so that there’s no need to build functioning real-world prototypes of every prospective path.

Using these powerful new tools to good effect requires, first, that we treat them as such: tools, not colleagues, not our replacements, not some auxiliary brain. Further, if we’re to unlock their full potential, it will be in tandem with our own minds and capacities. Let the LLM do what it’s good at, namely summoning numerous pieces of information in any combination, while we retain the decision-making about what matters.

A fog descending upon us, upon our minds, is a common trope for the difficulties inherent in clear thinking. Typically, we conceive of the fog as something we must cut through in order to arrive at some sensible destination. But what if that is a less interesting way to think about the problem? What if we reconceive the fog not as an impediment to progress but as a necessary aspect of it? What if we regard the fog of emerging-but-not-yet-resolved thought not as a hindrance to getting where we wish to go, but as an integral, even indispensable, part of the territory we’re exploring? What if, in other words, the fog IS the territory?

To extract meaning from the swirl and opacity of the fog, we must be able to map our pathways through it - even (or perhaps especially) when those pathways are branching and indirect. To summon what’s apt and useful from amid the seeming obscurity, we must capture the insights, questions, and contingencies generated along the way. For the past year, ZeroWidth has been working on a flexible, adaptable, collaborative, customizable suite of tools for precisely this kind of guided cognition: a means of both generating and representing the pathways through, and connections between, thoughts; a means of giving shape to inspiration, of systematizing innovation.

Ahead of its full launch, we invite you to try it out at no cost. At the link below you can learn about the tool, noodle around in it, and refine a process of your own creation. Give it a whirl and let us know what you think.

https://semfield.ai/

This free access is temporary for the purposes of beta-testing, so hop in now.